Self-Supervised 3D Semantic Occupancy Prediction from Multi-View 2D Surround Images

| Team: | S. Abualhanud, M. Mehltretter |

| Year: | 2024 |

| Duration: | Since 2024 |

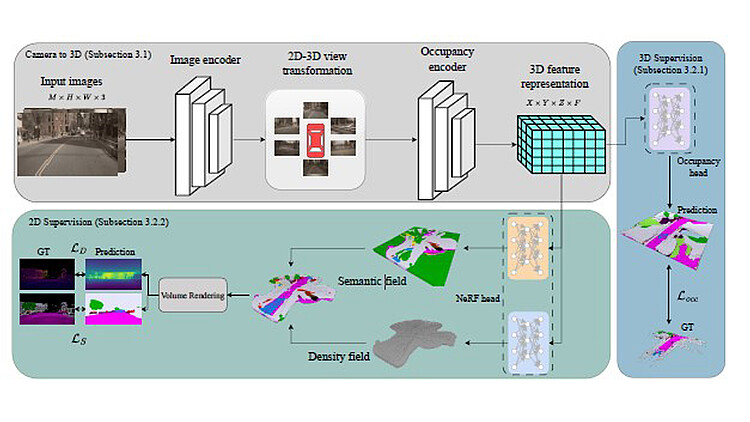

An accurate 3D representation of the geometry and semantics of an environment forms the basis for a large variety of downstream tasks and is essential for autonomous driving tasks such as path planning and obstacle avoidance. This work focuses on 3D semantic occupancy prediction, i.e., the reconstruction of a scene as a voxel grid in which each voxel is assigned both an occupancy state and a semantic label. We present a Convolutional Neural Network-based method that takes multiple colour images from a surround-view setup with minimal overlap, together with the associated interior and exterior camera parameters, as input and reconstructs the observed environment as a 3D semantic occupancy map. To account for the ill-posed nature of reconstructing a 3D representation from monocular 2D images, the image information is integrated over time: under the assumption that the camera setup is moving, images from consecutive time steps are used to form a multi-view stereo setup. In extensive experiments, we investigate the challenges posed by dynamic objects and the possibilities of training the proposed method with either 3D or 2D reference data, the latter being motivated by the comparatively high cost of generating and annotating 3D ground truth data. Moreover, we present and investigate a novel self-supervised training scheme that does not require any geometric reference data and relies only on sparse semantic ground truth. An evaluation on the Occ3D dataset demonstrates a significant improvement of our self-supervised variant over current state-of-the-art self-supervised methods from the literature.
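To make the terms above concrete, the following is a minimal sketch of a semantic occupancy grid and of projecting a voxel centre into one camera using interior (focal length, principal point) and exterior (rotation, translation) parameters. It is an illustration only and not the method described here: the names `VoxelGrid`, `project` and `FREE`, the label ids, and the simple distortion-free pinhole model with the convention `x_cam = R * x_world + t` are all assumptions made for this example.

```python
from dataclasses import dataclass

FREE = 0  # hypothetical label id meaning "voxel is not occupied"


@dataclass
class VoxelGrid:
    """Hypothetical container: one semantic label per voxel, FREE if empty."""
    origin: tuple      # (x, y, z) of the grid's minimum corner, in metres
    voxel_size: float  # edge length of a cubic voxel, in metres
    shape: tuple       # number of voxels along (x, y, z)

    def __post_init__(self):
        nx, ny, nz = self.shape
        # Occupancy and semantics are stored together: label != FREE means occupied.
        self.labels = [[[FREE] * nz for _ in range(ny)] for _ in range(nx)]

    def center(self, i, j, k):
        """World coordinates of the centre of voxel (i, j, k)."""
        return tuple(o + (idx + 0.5) * self.voxel_size
                     for o, idx in zip(self.origin, (i, j, k)))

    def is_occupied(self, i, j, k):
        return self.labels[i][j][k] != FREE


def project(point_world, R, t, fx, fy, cx, cy):
    """Project a world point into a pinhole camera (assumed model, no distortion).

    R (3x3 nested list) and t (length 3) are the exterior parameters mapping
    world to camera coordinates; fx, fy, cx, cy are the interior parameters.
    Returns pixel coordinates (u, v), or None if the point is behind the camera.
    """
    x, y, z = (sum(R[r][c] * point_world[c] for c in range(3)) + t[r]
               for r in range(3))
    if z <= 0:
        return None
    return (fx * x / z + cx, fy * y / z + cy)
```

In a setup like this, the pixel onto which an occupied voxel projects is where its predicted label could be compared with 2D semantic reference data; how the actual method performs this comparison is not specified here.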